In this lecture, we derive the maximum likelihood estimator of the parameter of an exponential distribution.

The theory needed to understand the proofs is explained in the introduction to maximum likelihood estimation (MLE).

We observe the first $n$ terms of an IID sequence $\{X_j\}$ of random variables having an exponential distribution.

A generic term of the sequence $X_j$ has probability density function

$$f_X(x_j;\lambda_0)=\begin{cases}\lambda_0\exp(-\lambda_0 x_j) & \text{if } x_j\in R_X\\ 0 & \text{otherwise}\end{cases}$$

where:

- $R_X=[0,\infty)$ is the support of the distribution;
- the rate parameter $\lambda_0$ is the parameter that needs to be estimated.
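As a quick illustration, the density above can be coded directly. This is a minimal Python sketch; the function name `exponential_pdf` and the argument `rate` (playing the role of $\lambda_0$) are hypothetical choices made here, not part of any library.

```python
import math


def exponential_pdf(x: float, rate: float) -> float:
    """Density of an exponential distribution with rate parameter `rate` at point x."""
    if rate <= 0:
        raise ValueError("the rate parameter must be strictly positive")
    # Outside the support [0, infinity) the density is zero.
    if x < 0:
        return 0.0
    return rate * math.exp(-rate * x)


print(exponential_pdf(0.0, 2.0))   # 2.0
print(exponential_pdf(1.0, 2.0))   # 2 * exp(-2)
print(exponential_pdf(-1.0, 2.0))  # 0.0
```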

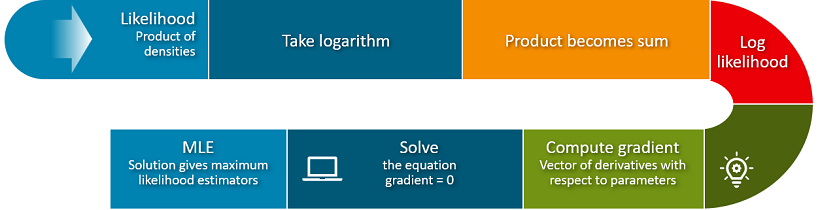

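The likelihood function is

$$L(\lambda;x_1,\ldots,x_n)=\lambda^n\exp\left(-\lambda\sum_{j=1}^n x_j\right)$$

Since the terms of the sequence are independent, the likelihood function is equal to the product of their densities:

$$L(\lambda;x_1,\ldots,x_n)=\prod_{j=1}^n f_X(x_j;\lambda)=\prod_{j=1}^n \lambda\exp(-\lambda x_j)\,1_{\{x_j\in R_X\}}$$

where $1_{\{x_j\in R_X\}}$ is the indicator function of the support. Because the observed values $x_1,\ldots,x_n$ can only belong to the support of the distribution, all the indicators are equal to 1 and we can write

$$L(\lambda;x_1,\ldots,x_n)=\prod_{j=1}^n \lambda\exp(-\lambda x_j)=\lambda^n\exp\left(-\lambda\sum_{j=1}^n x_j\right)$$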
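The two expressions for the likelihood (product of densities and closed form) can be compared numerically. In this sketch the helper names and the observed values `xs` are hypothetical, and every value in `xs` is assumed to lie in the support $[0,\infty)$.

```python
import math


def likelihood_as_product(rate, xs):
    # Product of the individual densities; assumes every x in xs lies in [0, inf).
    result = 1.0
    for x in xs:
        result *= rate * math.exp(-rate * x)
    return result


def likelihood_closed_form(rate, xs):
    # lambda^n * exp(-lambda * sum of the observations)
    return rate ** len(xs) * math.exp(-rate * sum(xs))


xs = [0.5, 1.2, 0.3, 2.0]  # hypothetical observed values
print(likelihood_as_product(1.5, xs))
print(likelihood_closed_form(1.5, xs))  # same value up to rounding
```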

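The log-likelihood function is

$$l(\lambda;x_1,\ldots,x_n)=n\ln(\lambda)-\lambda\sum_{j=1}^n x_j$$

This is obtained by taking the natural logarithm of the likelihood function:

$$l(\lambda;x_1,\ldots,x_n)=\ln\left(\lambda^n\exp\left(-\lambda\sum_{j=1}^n x_j\right)\right)=n\ln(\lambda)-\lambda\sum_{j=1}^n x_j$$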
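A minimal sketch of the log-likelihood in Python, with hypothetical data. Besides checking that it equals the logarithm of the likelihood, it illustrates a practical reason for working on the log scale: with many observations the likelihood itself underflows to zero in floating-point arithmetic, while the log-likelihood stays finite.

```python
import math


def log_likelihood(rate, xs):
    # n * ln(lambda) - lambda * sum(x_j)
    return len(xs) * math.log(rate) - rate * sum(xs)


xs = [0.5, 1.2, 0.3, 2.0]  # hypothetical observed values
print(log_likelihood(1.5, xs))
print(math.log(1.5 ** len(xs) * math.exp(-1.5 * sum(xs))))  # log of the likelihood

# With many observations the likelihood underflows to 0.0 in floating point,
# while the log-likelihood remains a finite, usable number.
many = [1.0] * 2000
likelihood = 1.0
for x in many:
    likelihood *= 1.5 * math.exp(-1.5 * x)
print(likelihood)                 # 0.0
print(log_likelihood(1.5, many))  # finite
```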

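The maximum likelihood estimator of $\lambda$ is

$$\widehat{\lambda}_n=\frac{n}{\sum_{j=1}^n X_j}$$

The estimator is obtained as a solution of the maximization problem

$$\widehat{\lambda}_n=\arg\max_{\lambda>0}\, l(\lambda;x_1,\ldots,x_n)$$

The first order condition for a maximum is

$$\frac{d}{d\lambda}l(\lambda;x_1,\ldots,x_n)=0$$

The derivative of the log-likelihood is

$$\frac{d}{d\lambda}l(\lambda;x_1,\ldots,x_n)=\frac{n}{\lambda}-\sum_{j=1}^n x_j$$

By setting it equal to zero, we obtain

$$\lambda=\frac{n}{\sum_{j=1}^n x_j}$$

Note that the division by $\sum_{j=1}^n x_j$ is legitimate because exponentially distributed random variables can take on only non-negative values, and strictly positive values with probability 1. Moreover, the second derivative $-n/\lambda^2$ is negative for every $\lambda>0$, so the log-likelihood is strictly concave and this stationary point is indeed its maximum.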
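The closed-form solution can be cross-checked by maximizing the log-likelihood numerically. The sketch below uses golden-section search, a simple derivative-free optimizer chosen here for illustration (it is not part of the derivation above); it applies because the log-likelihood $n\ln(\lambda)-\lambda\sum_j x_j$ is strictly concave, hence unimodal, on $\lambda>0$. The data and the search interval are hypothetical.

```python
import math


def log_likelihood(rate, xs):
    # n * ln(lambda) - lambda * sum(x_j)
    return len(xs) * math.log(rate) - rate * sum(xs)


def golden_section_max(f, lo, hi, tol=1e-9):
    """Maximize a unimodal function f on the interval [lo, hi]."""
    inv_phi = (math.sqrt(5) - 1) / 2  # about 0.618
    a, b = lo, hi
    c = b - inv_phi * (b - a)
    d = a + inv_phi * (b - a)
    while b - a > tol:
        if f(c) > f(d):
            # The maximum lies in [a, d]
            b, d = d, c
            c = b - inv_phi * (b - a)
        else:
            # The maximum lies in [c, b]
            a, c = c, d
            d = a + inv_phi * (b - a)
    return (a + b) / 2


xs = [0.5, 1.2, 0.3, 2.0]  # hypothetical observed values, sum = 4.0
numerical = golden_section_max(lambda r: log_likelihood(r, xs), 1e-6, 50.0)
closed_form = len(xs) / sum(xs)
print(numerical, closed_form)  # both approximately 1.0
```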

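Therefore, the estimator $\widehat{\lambda}_n$ is just the reciprocal of the sample mean

$$\overline{X}_n=\frac{1}{n}\sum_{j=1}^n X_j$$

that is, $\widehat{\lambda}_n=1/\overline{X}_n$.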
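In code, the estimator is a one-liner. The sketch below is a hypothetical helper (`exponential_mle`) applied to simulated data; the true rate, seed and sample size are illustrative assumptions. It uses `random.Random.expovariate`, whose argument is the rate $\lambda$ (not the mean).

```python
import random


def exponential_mle(xs):
    """Maximum likelihood estimate of the rate: the reciprocal of the sample mean."""
    if not xs:
        raise ValueError("at least one observation is needed")
    total = sum(xs)
    if total <= 0:
        raise ValueError("the observations must have a strictly positive sum")
    return len(xs) / total


rng = random.Random(42)
true_rate = 2.5  # hypothetical value of lambda_0
sample = [rng.expovariate(true_rate) for _ in range(10_000)]
print(exponential_mle(sample))  # close to 2.5
```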

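The estimator $\widehat{\lambda}_n$ is asymptotically normal with asymptotic mean equal to $\lambda_0$ and asymptotic variance equal to

$$V=\lambda_0^2$$

in the sense that $\sqrt{n}\,(\widehat{\lambda}_n-\lambda_0)$ converges in distribution to a normal distribution with mean $0$ and variance $V$.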
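A small Monte Carlo experiment can make the asymptotic result concrete. The sketch below, with a hypothetical true rate, sample size and number of replications, draws many samples, computes $\sqrt{n}(\widehat{\lambda}_n-\lambda_0)$ for each, and compares the empirical mean and variance with $0$ and $\lambda_0^2$. Finite-sample quantities only approximate their limits, so the printed values will not match exactly.

```python
import math
import random
import statistics

rng = random.Random(0)
true_rate = 2.0      # hypothetical lambda_0
n = 500              # sample size in each replication
replications = 2000  # number of simulated samples

scaled_errors = []
for _ in range(replications):
    xs = [rng.expovariate(true_rate) for _ in range(n)]
    estimate = n / sum(xs)  # reciprocal of the sample mean
    scaled_errors.append(math.sqrt(n) * (estimate - true_rate))

print(statistics.mean(scaled_errors))      # should be near 0
print(statistics.variance(scaled_errors))  # should be near true_rate ** 2 = 4.0
```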

The asymptotic variance can be derived as follows. Consider the log-likelihood of a single observation, $\ln f_X(x;\lambda)=\ln(\lambda)-\lambda x$. The score is

$$\nabla_\lambda \ln f_X(x;\lambda)=\frac{1}{\lambda}-x$$

The Hessian is

$$H(\lambda;x)=\nabla_{\lambda\lambda}\ln f_X(x;\lambda)=-\frac{1}{\lambda^2}$$

By the information equality, we have that

$$\mathrm{E}\left[\left(\nabla_\lambda \ln f_X(X;\lambda_0)\right)^2\right]=-\mathrm{E}\left[H(\lambda_0;X)\right]=\frac{1}{\lambda_0^2}$$

Finally, the asymptotic variance is

$$V=\left(-\mathrm{E}\left[H(\lambda_0;X)\right]\right)^{-1}=\lambda_0^2$$
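The information equality for this model can be checked by simulation: the score evaluated at $\lambda_0$ should have mean close to zero, and its mean square should be close to minus the Hessian, $1/\lambda_0^2$. The true rate, seed and number of draws below are hypothetical choices for illustration.

```python
import random

rng = random.Random(1)
true_rate = 0.5  # hypothetical lambda_0
draws = [rng.expovariate(true_rate) for _ in range(200_000)]

# Score of a single observation, evaluated at lambda_0: 1/lambda_0 - x
scores = [1 / true_rate - x for x in draws]
mean_score = sum(scores) / len(scores)
mean_squared_score = sum(s * s for s in scores) / len(scores)

# Minus the Hessian, -H = 1/lambda_0**2, does not depend on x
minus_hessian = 1 / true_rate ** 2

print(mean_score)          # near 0
print(mean_squared_score)  # near minus_hessian = 4.0
print(1 / minus_hessian)   # asymptotic variance V = true_rate ** 2 = 0.25
```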

This means that the distribution of the maximum likelihood estimator $\widehat{\lambda}_n$ can be approximated by a normal distribution with mean $\lambda_0$ and variance $\lambda_0^2/n$.
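One common use of this normal approximation, sketched here as an illustration rather than something derived on this page, is an approximate 95% interval $\widehat{\lambda}_n \pm 1.96\,\widehat{\lambda}_n/\sqrt{n}$, in which the unknown $\lambda_0$ in the variance is replaced by its estimate (a plug-in assumption). The function name, seed and simulation settings are hypothetical; the simulation only checks how often the interval covers the true rate.

```python
import math
import random


def approximate_interval(xs, z=1.96):
    """Normal-approximation interval for the rate, with lambda_0 replaced by its estimate."""
    n = len(xs)
    rate_hat = n / sum(xs)
    half_width = z * rate_hat / math.sqrt(n)  # z * sqrt(rate_hat**2 / n)
    return rate_hat - half_width, rate_hat + half_width


rng = random.Random(7)
true_rate = 3.0  # hypothetical lambda_0
n, replications = 200, 1000

covered = 0
for _ in range(replications):
    xs = [rng.expovariate(true_rate) for _ in range(n)]
    low, high = approximate_interval(xs)
    if low <= true_rate <= high:
        covered += 1

print(covered / replications)  # roughly 0.95
```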

StatLect has several pages like this one. Learn how to derive the MLEs of the parameters of the following distributions and models.

| Distribution or model | Type | Solution |
|---|---|---|
| Normal distribution | Univariate distribution | Analytical |
| Poisson distribution | Univariate distribution | Analytical |
| T distribution | Univariate distribution | Numerical |
| Multivariate normal distribution | Multivariate distribution | Analytical |
| Normal linear regression model | Regression model | Analytical |
| Logistic classification model | Classification model | Numerical |
| Probit classification model | Classification model | Numerical |

Please cite as:

Taboga, Marco (2021). "Exponential distribution - Maximum Likelihood Estimation", Lectures on probability theory and mathematical statistics. Kindle Direct Publishing. Online appendix. https://www.statlect.com/fundamentals-of-statistics/exponential-distribution-maximum-likelihood.
